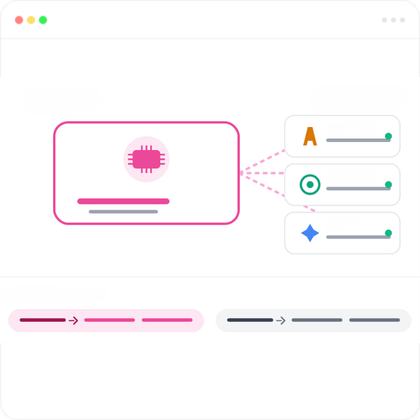

Arke LLM is our domain-tuned language model running entirely on your own servers and GPUs — fully on-premise, fully under your control. When you need frontier intelligence, connect Claude, GPT, and Gemini via API in the same orchestration layer. The right model for the right task — without compromising sovereignty.


Arke LLM is built to live inside your infrastructure. Deploy it on your own data center hardware, your private cloud, or air-gapped environments — no internet connection required at runtime. Every inference happens on your GPUs. Every token stays within your network perimeter. Sensitive data never reaches a third-party server, because there is no third-party server.

On-premise sovereignty doesn't mean isolation from the world's best AI. Connect Claude, GPT, and Gemini through their APIs — all managed in the same Arketic orchestration layer. Route sensitive data to your on-prem Arke LLM. Send general tasks to frontier models for maximum capability. Your governance rules decide what goes where, automatically.


Generic models speak generic language. Arke LLM speaks yours. We tune the model on your industry's terminology, your company's documents, your regulatory context, and your operational reality. The result: more accurate responses, fewer hallucinations, better compliance — and a model that actually understands what your business is talking about.

Arke LLM is Arketic's domain-tuned large language model designed to run entirely on your own infrastructure. Unlike API-based models from OpenAI, Anthropic, or Google, Arke LLM lives on your servers, uses your GPUs, and never sends data outside your network. It's purpose-built for organizations that need state-of-the-art language AI without sacrificing data sovereignty.

Yes, you can run Arke LLM alongside frontier models, and most of our customers do. Arketic's orchestration layer connects Arke LLM with frontier models from Anthropic, OpenAI, and Google through their APIs. You define routing rules: sensitive or regulated data stays on-prem with Arke LLM, while general tasks can leverage frontier models for maximum capability. One platform, one governance layer, multiple models.
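To make the idea of routing rules concrete, here is a minimal sketch in Python. It is not Arketic's actual rule format or API: the names `RoutingRule`, `route`, `ON_PREM`, and `FRONTIER`, as well as the regex patterns, are assumptions chosen purely for illustration. The sketch only shows the general pattern of checking a prompt against sensitivity rules and picking a target model.

```python
import re
from dataclasses import dataclass

# Hypothetical target labels for illustration; not real Arketic identifiers.
ON_PREM = "arke-llm-on-prem"
FRONTIER = "frontier-api"


@dataclass(frozen=True)
class RoutingRule:
    """One governance rule: if the pattern matches, send the prompt to target."""
    name: str
    pattern: re.Pattern
    target: str


# Example rules (assumed): anything that looks sensitive stays on-prem.
RULES = [
    RoutingRule("iban", re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"), ON_PREM),
    RoutingRule("email", re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), ON_PREM),
    RoutingRule(
        "confidential-marker",
        re.compile(r"\b(confidential|internal only)\b", re.IGNORECASE),
        ON_PREM,
    ),
]


def route(prompt: str, default: str = FRONTIER) -> str:
    """Return the target of the first matching rule, else the default target."""
    for rule in RULES:
        if rule.pattern.search(prompt):
            return rule.target
    return default


if __name__ == "__main__":
    print(route("Summarize the history of the printing press."))
    print(route("Email jane.doe@example.com about the contract renewal."))
```

In this sketch, unmatched prompts fall through to the frontier target. A stricter policy could pass `default=ON_PREM` so that only explicitly cleared traffic leaves the network.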

Arke LLM is optimized for modern GPU infrastructure. Recommended configurations include NVIDIA A100, H100, or L40 GPUs, with the exact sizing depending on your model variant and expected throughput. Our deployment team works with your IT team to right-size the infrastructure based on your usage patterns. We support single-node deployments as well as multi-node deployments for high-availability scenarios.

Frontier models like Claude and GPT remain the most capable for general-purpose reasoning. Arke LLM is designed for a different goal: domain accuracy and data sovereignty. After fine-tuning on your industry corpus, Arke LLM often outperforms general models on tasks specific to your domain — legal contract analysis, regulatory interpretation, technical documentation in your terminology. The hybrid approach gives you the best of both worlds.

Updates happen on your terms. New base model versions, security patches, and capability improvements are delivered as deployable packages — your IT team controls when and how to apply them. Continuous fine-tuning on your operational data happens entirely within your infrastructure, so the model gets smarter about your business without ever sending data outside your network.